Reaches, Steals, and Who's Actually Right: Big Boards vs. NFL Teams in the Draft

Ames · Apr 29, 2025

Draft week is over, and that means it's time for the annual ritual: every major outlet slaps a letter grade on every team's class within 48 hours of the final pick, and the internet lights up with arguments. This year I want to push back on something about how those grades work — and back it up with actual data.

1. The Big Board Problem

1.1. Everyone Has a Big Board

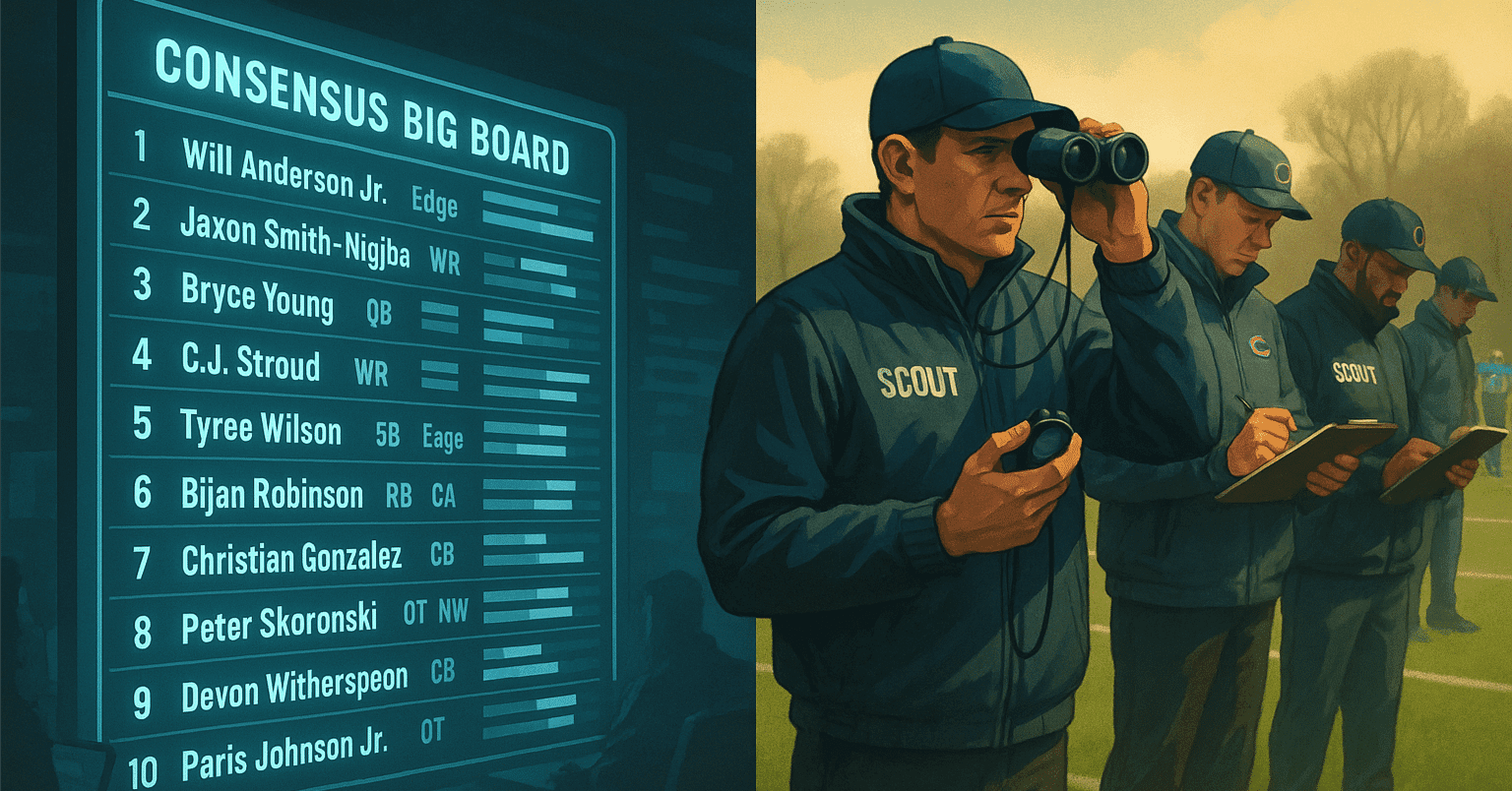

As the NFL Draft approaches, prospect rankings become impossible to avoid. Die-hard college football fans and casual fans who only watch the NFL alike want to know who the top prospects are, how they line up with their team's needs, and which players are flying under the radar. Mock drafts are everywhere, and, to be fair, our own site has a Mock Draft Simulator.

The tool everyone uses to answer those questions is the big board — a ranked list of prospects put together by scouts, analysts, and media organizations. In Japanese NFL Twitter circles, tamago's board is the gold standard: detailed, annotated, and updated thoughtfully. I read it every year.

The problem: there are over 200 big boards out there, and individual analysts bring their own preferences, differences in access, and positional biases. Some boards disagree radically. Last year, rankings of JJ McCarthy (MIN) were all over the place, and this year outlets ranked Shedeur Sanders anywhere from QB1 to the late third round (though I don't think anyone predicted he'd fall to the fifth).

1.2. Consensus Big Boards

The solution that emerged over the last decade: aggregate the boards. Collect as many as possible, average them out, and produce a "consensus big board" that smooths out individual biases. A few notable examples:

- Wide Left by Arif Hasan — The pioneer, going back to 2014 when consensus boards barely existed. Methodology is fully public, and it improves every year. The 2025 edition weights 112 analysts by historical accuracy.

- NFL Mock Draft Database — Aggregates 199 big boards and 3,000 mock draft results. Massive scale, comes with a simulator.

- Lichtenstein Board by Jack Lichtenstein — Built from 16 major media outlets only. Cleaner, more legible than the larger aggregators.
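Mechanically, a consensus board is just a (possibly weighted) average of ranks. The sketch below is a minimal illustration, not the method any of these sites actually uses: the analyst and player names are made up, the `weights` argument stands in for something like Wide Left's accuracy weighting (whose actual formula isn't described here), and placing a player who is missing from a board one spot below that board's last entry is my own assumption.

```python
from collections import defaultdict


def consensus_board(boards, weights=None):
    """Average each player's rank across boards and sort by that average.

    boards:  dict of analyst name -> list of player names, best first
    weights: optional dict of analyst name -> weight (default 1.0 each)
    Assumption: a player missing from a board gets rank len(board) + 1 there.
    """
    weights = weights or {}
    players = {p for board in boards.values() for p in board}
    weighted_sum = defaultdict(float)
    total_weight = 0.0
    for analyst, board in boards.items():
        w = weights.get(analyst, 1.0)
        total_weight += w
        rank_of = {p: i + 1 for i, p in enumerate(board)}
        for p in players:
            weighted_sum[p] += w * rank_of.get(p, len(board) + 1)
    avg_rank = {p: weighted_sum[p] / total_weight for p in players}
    # Lower average rank ranks higher; ties are broken alphabetically
    return sorted(players, key=lambda p: (avg_rank[p], p))


# Hypothetical boards from three made-up analysts
boards = {
    "analyst_a": ["Player A", "Player B", "Player C"],
    "analyst_b": ["Player B", "Player A", "Player C"],
    "analyst_c": ["Player A", "Player C", "Player B"],
}
print(consensus_board(boards))                      # equal weights
print(consensus_board(boards, {"analyst_b": 4.0}))  # trust analyst_b more
```

With equal weights the order comes out A, B, C; giving `analyst_b` four times the weight flips A and B. That is the point of accuracy weighting: a historically sharp evaluator pulls the consensus toward their view.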

The irony: consensus big boards have become so popular that there are now about ten of them, and they don't always agree. We've reached a point where you need a consensus of the consensus boards.

My personal recommendation is Wide Left — not just because it's the oldest, but because Hasan actually does the work of validating his own predictions. He publishes annual retrospectives:

- Did big board rankings or actual NFL picks better predict player value? (the focus of today's piece)

- Grading the individual big boards for accuracy

- How "Forecasters" (media) and "Evaluators" (draft analysts) differ in their assessments

That kind of systematic self-evaluation is rare. He's not just publishing rankings — he's checking whether his rankings were right and updating the model accordingly.

2. The Research: Testing "Reaches" and "Steals"

Example: A player projected 17th on the big board who Baltimore selected at 59th — categorized as a "steal"


Every draft, I find myself increasingly frustrated by the letter-grade coverage. Almost none of it uses data. The implicit logic tends to be: "we had this player ranked high, the team picked him high, A+; we had this player ranked low, they wasted a pick on him, D." That's circular reasoning — you're grading teams based on how closely they agreed with your own board.

If a team and the big board disagree, the right response from the media isn't "what a bad pick." It's "maybe our evaluation was off." (To his credit, tamago consistently publishes coverage percentages, as in "my board covered X% of actual picks," which is exactly the right instinct.)

So, putting my own assumptions to the test: I've always believed NFL teams — who spend millions on scouts, conduct private workouts and interviews, and have detailed scheme knowledge — should be significantly better at evaluating players than external analysts. That belief leads to two hypotheses:

Hypothesis 1: Players labeled "reaches" on draft night end up performing at or near their pick value anyway.

Hypothesis 2: Players labeled "steals" end up not being that special after all.

To test these, I pulled from three published analyses, all of which use Hasan's consensus big board as the baseline.

3. Results

3.1. The Consensus Big Board Is About as Accurate as the NFL's Own Picks (Through Round 3)

From the Riske piece at PFF: across the first three rounds, the consensus big board and the NFL's actual picks produce nearly identical total player value. The NFL is marginally better overall, but the gap is small enough to be within noise.

Career PFF WAR plotted for "top n picks" vs. "top n board players" — the lines track closely. Free to read up to this chart (from PFF)


Two nuances are worth noting:

- In the first round, the NFL's advantage looks larger, but this is mostly because big boards have historically undervalued QBs. When you filter to non-QBs, the gap largely disappears.

- In rounds 4+, the NFL's advantage widens, but that's less about superior evaluation and more about opportunity: a ranked player who goes undrafted has essentially no chance to prove his value, while a player picked in round 5 at least gets a shot.
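The "top n picks" vs. "top n board players" comparison is easy to reproduce once you have per-player data. This is a minimal sketch with invented players and WAR figures (not PFF data); the field names are hypothetical, undrafted players (`pick=None`) simply sort after every drafted player, and the optional position filter mirrors the non-QB check.

```python
import math


def top_n_war(players, n, order_by, exclude_positions=()):
    """Sum career WAR for the first n players in a given order.

    order_by: "pick" (the NFL's actual order) or "board_rank" (consensus order).
    Players with no value for order_by (e.g. undrafted) sort last.
    """
    pool = [p for p in players if p["pos"] not in exclude_positions]
    ranked = sorted(
        pool,
        key=lambda p: math.inf if p[order_by] is None else p[order_by],
    )
    return sum(p["war"] for p in ranked[:n])


# Invented example: the NFL takes first a QB whom the board had 6th
players = [
    {"pos": "QB", "pick": 1, "board_rank": 6, "war": 9.0},
    {"pos": "EDGE", "pick": 2, "board_rank": 1, "war": 4.0},
    {"pos": "WR", "pick": 3, "board_rank": 2, "war": 3.5},
    {"pos": "CB", "pick": 4, "board_rank": 3, "war": 2.0},
    {"pos": "OT", "pick": 5, "board_rank": 4, "war": 2.5},
    {"pos": "S", "pick": None, "board_rank": 5, "war": 0.0},
]
for n in (3, 5):
    print(n, top_n_war(players, n, "pick"), top_n_war(players, n, "board_rank"))
print("non-QB top 3:",
      top_n_war(players, 3, "pick", exclude_positions={"QB"}),
      top_n_war(players, 3, "board_rank", exclude_positions={"QB"}))
```

In this toy data the NFL's order wins on total WAR only because of the QB. With QBs filtered out, the two orders produce the same top-3 total.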

The Hasan piece adds another layer: when you compare the consensus board to individual analysts, the consensus beats almost everyone. Over the 2016–2022 draft classes, only two individual analysts outperformed consensus on the top 100. (I'd love to know who they are.)
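Why would an average beat almost every individual? Independent errors tend to cancel out. The sketch below scores boards by mean absolute rank error against realized value; that metric is my own simple stand-in, not necessarily how Hasan grades accuracy, and the boards and "true" ranks are invented.

```python
def mean_abs_error(board, true_rank):
    """Average |board position - realized value rank| over the board's players.

    board: list of player names, best first (all must appear in true_rank)
    true_rank: dict of player -> rank by realized career value (1 = best)
    """
    errors = [abs((i + 1) - true_rank[p]) for i, p in enumerate(board)]
    return sum(errors) / len(errors)


true_rank = {"A": 1, "B": 2, "C": 3, "D": 4}
boards = {
    "analyst_1": ["B", "A", "C", "D"],  # swaps the top two
    "analyst_2": ["A", "C", "B", "D"],  # swaps the middle two
    "analyst_3": ["A", "B", "D", "C"],  # swaps the bottom two
}
# Consensus: sort players by their summed position across all boards
consensus = sorted(true_rank, key=lambda p: sum(b.index(p) for b in boards.values()))

for name, board in boards.items():
    print(name, mean_abs_error(board, true_rank))
print("consensus", consensus, mean_abs_error(consensus, true_rank))
```

Each analyst makes one different mistake and scores 0.5. Because the mistakes don't overlap, summing positions cancels them and the consensus comes out perfect. Real boards likely share more of their errors, so the gain is smaller, but the mechanism is the same.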

3.2. "Reach" Picks Are Bad at the Rate You'd Expect

Using the Hasan dataset: after computing each player's "true value" from career PFF WAR (adjusted for position and snaps), the analysis checks whether the NFL or the consensus board was closer to that true value for players where the two diverged significantly.

Here's what it looks like for Patrick Mahomes, for reference:

Patrick Mahomes (2017 Draft)

- Consensus big board: 30th

- NFL's actual pick: 10th

- True career value rank: 1st
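The "who was closer?" check for a single player is straightforward. A minimal sketch with a hypothetical function name; for simplicity it measures closeness as raw rank distance, whereas the analysis may well compare in pick-value terms.

```python
def closer_evaluator(board_rank, pick, true_rank):
    """Say whether the board or the NFL landed closer to realized value.

    Closeness is raw rank distance, a simplifying assumption.
    """
    board_miss = abs(board_rank - true_rank)
    nfl_miss = abs(pick - true_rank)
    if nfl_miss < board_miss:
        return "NFL"
    if board_miss < nfl_miss:
        return "board"
    return "tie"


# Mahomes, 2017: board 30th, picked 10th, 1st in realized career value
print(closer_evaluator(board_rank=30, pick=10, true_rank=1))
```

For Mahomes this returns "NFL": the board missed by 29 spots, the pick by 9.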

The NFL was right. Now aggregate across all players who were "reaches," defined as picks where the value of the player's board rank was less than 85% of the value of the pick spent on him (i.e., he went substantially earlier than consensus):

In the first round, the consensus board was right about reaches roughly 80% of the time. This doesn't necessarily mean those players were busts — just that they didn't justify the pick value the team assigned them.

In rounds 2–3, the split gets more interesting: the board still wins more often than not, but the margin shrinks. This is where teams with proprietary information — character background, private workout results, scheme fit data — start to show an edge that external analysts can't replicate.

(Definition note: using pick value instead of raw pick number matters here. A team using the #1 overall pick on a player ranked 31st on the board is making a much bigger reach than a team using pick #100 on a player ranked 130th, even though both involve a 30-spot gap. Because pick value drops off steeply at the top of the draft, the 85% threshold scales accordingly: in the early picks, going half a round ahead of the board counts as a reach; in the middle rounds, it takes a full round.)
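To make the threshold concrete, here is a minimal sketch of the reach/steal labels, read as the ratio of the board rank's value to the value of the pick actually spent: below 0.85 is a reach, and above 1.15 (the steal threshold from section 3.3) is a steal. The `pick_value` curve is purely hypothetical, chosen only because it falls off faster near the top of the draft; it is not the chart the Hasan analysis uses.

```python
def pick_value(slot, offset=80.0):
    """Hypothetical value curve: value falls off as 1 / (slot + offset).

    Illustrative assumption only; any smoothly decreasing chart can be swapped in.
    """
    return 1.0 / (slot + offset)


def classify(pick, board_rank, reach_cut=0.85, steal_cut=1.15):
    """Label a pick by comparing the value of the player's board rank
    with the value of the slot the team actually used."""
    ratio = pick_value(board_rank) / pick_value(pick)
    if ratio < reach_cut:
        return "reach"  # board rank worth much less than the pick spent
    if ratio > steal_cut:
        return "steal"  # board rank worth much more than the pick spent
    return "neutral"


for pick, rank in [(1, 31), (100, 130), (59, 17), (10, 30)]:
    print(pick, rank, classify(pick, rank))
```

With this toy curve, spending pick 1 on the 31st-ranked player is a reach while spending pick 100 on the 130th-ranked player is not, the 17th-ranked player taken 59th (the caption example above) is a steal, and Mahomes at 10th vs. 30th registers as a reach. The reach cutoff works out to about 15 spots at pick 1 and about 29 spots at pick 80.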

3.3. "Steals" Are Overrated

For "steals," defined as picks where the value of the player's board rank exceeded 115% of the value of the pick used (meaning the team took him significantly later than consensus suggested):

The actual pick value was right far more often than the board. Even within the first three rounds, the consensus board was right less than 20% of the time on steals. In other words, when 31 teams pass on a player and one finally takes him late, those 31 teams were probably right.

The Hasan piece does note one caveat: "steal" picks tend to outperform their pick slot, even if they don't live up to the board's lofty projection. So identifying steals isn't useless; it's that the board's exact projected value is almost always too optimistic. Kyle Hamilton (BAL) may be the exception that proves the rule: a legitimately transcendent player taken later than the boards projected, by a team that clearly knew what they had.
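The headline percentages in 3.2 and 3.3 are win rates over a category of picks. A minimal sketch with made-up steal cases (not data from any of the analyses), measuring closeness by raw rank distance as a simplification. It also counts how many of those players beat their draft slot, which is the caveat above.

```python
def board_hit_rate(cases):
    """Share of cases where the consensus board was closer than the NFL.

    cases: list of (board_rank, pick, true_rank) tuples for one category,
    e.g. all first-round "reaches". Exact ties count for neither side.
    """
    board_wins = sum(1 for b, p, t in cases if abs(b - t) < abs(p - t))
    return board_wins / len(cases)


# Made-up "steals": (board rank, actual pick, realized value rank)
steals = [(17, 59, 45), (20, 50, 60), (10, 40, 12), (30, 70, 80), (25, 60, 55)]
print("board right:", board_hit_rate(steals))
print("beat their slot:", sum(1 for b, p, t in steals if t < p), "of", len(steals))
```

Here the board wins only 1 of 5 cases, yet 3 of the 5 players still outperformed the pick used on them. Both halves of the finding can hold at once.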

Putting 3.2 and 3.3 together:

- "We're reaching on this guy because our scouts love him" — probably wrong (especially in round 1)

- "They left a steal on the board" — almost certainly wrong

The asymmetry makes intuitive sense. A reach only requires one team to overvalue a player. A true steal requires thirty-one teams to collectively undervalue him. The former happens regularly; the latter almost never does.
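A toy probability model makes the asymmetry concrete. Assume (strongly, and purely for illustration) that each team judges a given player independently, overvaluing him with probability `p_over` and undervaluing him with probability `p_under`. A reach needs just one team to overvalue; a steal, in the framing above, needs all thirty-one passing teams to undervalue.

```python
def p_reach(p_over, teams=32):
    """Chance that at least one team overvalues the player (independence assumed)."""
    return 1 - (1 - p_over) ** teams


def p_steal(p_under, passing_teams=31):
    """Chance that every passing team undervalues the player (independence assumed)."""
    return p_under ** passing_teams


# Even if undervaluing is far more common than overvaluing,
# the "everyone missed" scenario stays vanishingly rare.
for p_over, p_under in [(0.1, 0.1), (0.2, 0.5), (0.2, 0.8)]:
    print(f"over={p_over}, under={p_under}: "
          f"reach {p_reach(p_over):.3f}, steal {p_steal(p_under):.2e}")
```

Independence is the big simplification. Teams watch the same tape and the same combine, so their errors are correlated, which makes an all-31-teams miss more likely than this model suggests; the stronger that correlation, the smaller the gap between the two numbers.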

4. How to Use Big Boards Going Forward

My original hypotheses were half right: Hypothesis 2 (steals are overrated) held up; Hypothesis 1 (reaches work out) did not.

Here's the practical summary:

- The consensus big board is genuinely accurate through the top 100 — use it as a reference, not just entertainment

- First-round reaches fall short of their pick value roughly 80% of the time; if your team makes one, temper your excitement

- "Steals" don't usually hit the board's projection — they tend to outperform their pick slot but rarely reach their supposed ceiling

- In rounds 2–3, it's murky — teams with strong scouting infrastructure do have edges that boards can't see

- Don't use any of this to predict what happens with Shedeur Sanders

Thanks for reading — this one ran long even by my standards.


Feedback

Questions, thoughts, or feedback on this article? Feel free to reach out.